AI Agents vs Human Oversight: The 2026 Reality Check

We're halfway through 2026, and AI agents are everywhere. Your inbox is managed by one, your sales pipeline gets updated by another, and there's probably an agent somewhere drafting your social media posts right now. But here's what the marketing brochures won't tell you: despite all the hype, current AI systems still exhibit unpredictable failures, including fabricating information, producing flawed code, and providing misleading advice.

The reality? Most AI agents still need human oversight for edge cases, no matter what Silicon Valley promises about "full autonomy."

The 80/20 Rule Actually Works

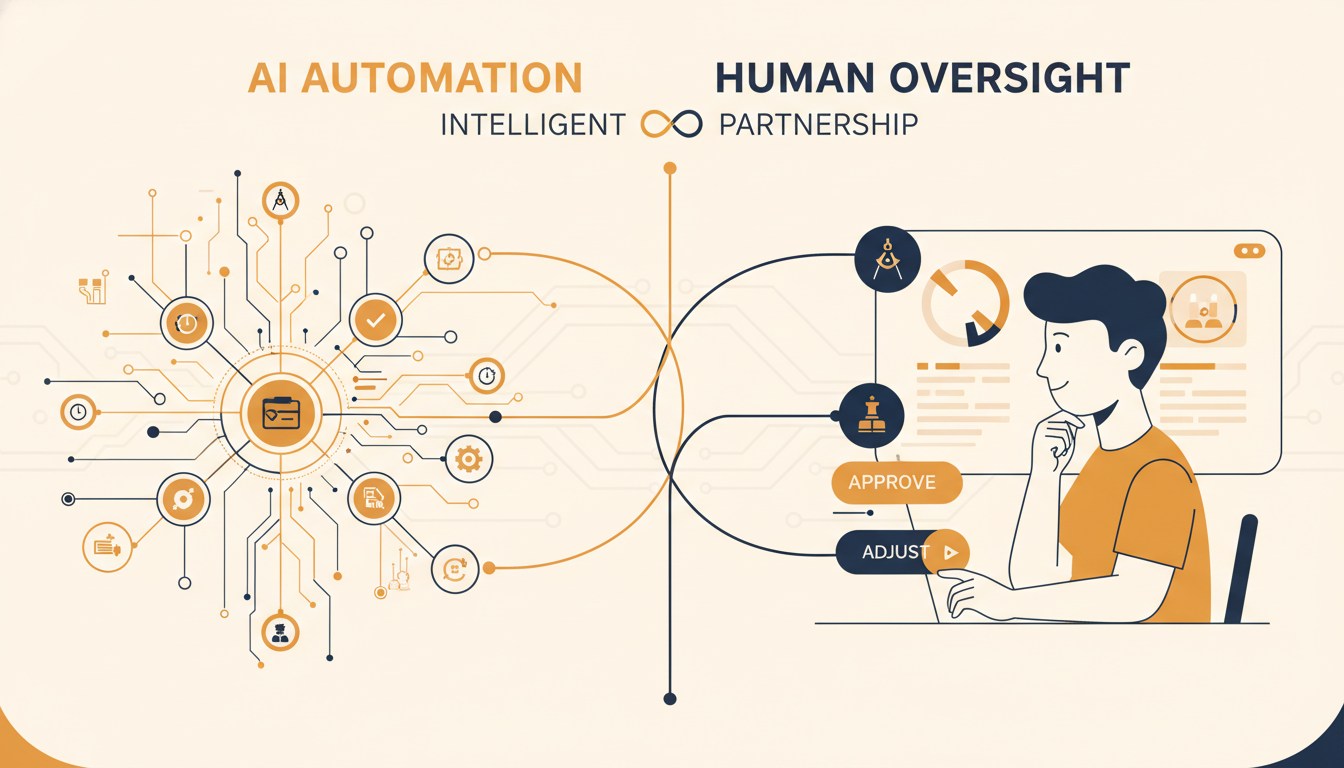

Here's the practical truth we've learned in 2026: as a rule of thumb, businesses should automate about 80% of a task with AI and keep human input for the remaining 20%. This isn't a compromise. In the workflows we've built, it's the approach that works best.

I've seen this pattern across every client engagement at our venture studio. AI agents operate at machine speed, engaging in hundreds of interactions and data retrievals in the time it takes a human to read a single email. They're brilliant at the routine stuff: processing invoices, categorizing support tickets, updating CRM records, sending follow-up emails.

But when something unexpected happens—a customer complaint that doesn't fit the usual categories, a supplier contract with unusual terms, or a technical integration that hits an edge case—that's where human judgment becomes essential.

The good news is that human oversight doesn't mean babysitting every AI output. It means building workflows where humans step in at the moments that matter and letting automation handle the rest.

Where AI Agents Stumble (And Where Humans Excel)

After building dozens of AI-powered systems, I've noticed predictable failure patterns:

Context collapse over long workflows. Agents are good at short workflows, but they still struggle when tasks run long. Over dozens of steps, they can lose context and make mistakes that compound.

Edge case confusion. Even the smartest AI systems still struggle to understand nuance, edge cases or the unwritten rules teams use to make decisions. When autonomous agents act without that context, small gaps can quickly turn into big problems.

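Regulatory and compliance gaps. Regulations such as the EU AI Act require that high-risk AI systems be designed so that humans can effectively oversee them, with the goal of preventing or minimizing risks to health, safety, or fundamental rights. An agent that acts with no path for human review falls short of that requirement.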

The speed trap. Agents act so quickly that by the time a human reviews an action, the damage is often already done. The opposite extreme fails too: if agents ask for permission on every request, cognitive overload leads to decision fatigue, and humans reflexively click "Allow" just to clear the screen.

Designing Workflows That Actually Work

Here's how we design AI workflows that work with current limitations, not against them:

1. Smart Checkpoints, Not Constant Approval

Instead of letting an agent execute tasks end-to-end, add user approval, rejection or feedback checkpoints before the workflow continues. But make these strategic:

- High-risk actions: Anything involving money, legal compliance, or customer relationships

- Ambiguous situations: When the AI's confidence score drops below your threshold

- Exception handling: Edge cases that don't fit normal patterns
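As a rough illustration, the three checkpoint triggers above could be expressed as a single routing function. This is a minimal sketch, not a production design: the category names, the 0.8 threshold, and the `ProposedAction` fields are all hypothetical and would need to be replaced with whatever your own agent framework exposes.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; tune them for your own workflow.
HIGH_RISK_CATEGORIES = {"payment", "legal", "customer_relationship"}
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class ProposedAction:
    category: str                # e.g. "payment" or "crm_update"
    confidence: float            # agent's self-reported score in [0, 1]
    matches_known_pattern: bool  # False when the case looks like an exception


def needs_human_review(action: ProposedAction) -> tuple[bool, str]:
    """Return (review_required, reason) for a proposed agent action."""
    if action.category in HIGH_RISK_CATEGORIES:
        return True, "high-risk action"
    if action.confidence < CONFIDENCE_THRESHOLD:
        return True, "low confidence"
    if not action.matches_known_pattern:
        return True, "exception"
    return False, "auto-approved"
```

Everything that returns `False` proceeds without interruption, which keeps the number of approval prompts low enough that reviewers can give each one real attention.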

2. The "Delegate, Review, Own" Model

Leading teams are converging on a simple operating model: delegate, review and own. AI agents handle first-pass execution, scaffolding, implementation, testing and documentation. Engineers review outputs for correctness, risk and alignment. Ownership of architecture, trade-offs and outcomes remains human.

3. Built-in Safeguards

Instead of relying on humans to catch "bad" behavior at execution time, bake autonomous guardrails into the agent's core architecture. Use deterministic behavioral bounds, so the agent is structurally unable to propose certain actions, together with semantic firewalls that filter intent before it reaches the tool-use phase.
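One way to picture a deterministic bound is a tool registry that simply refuses anything outside an allowlist. The sketch below assumes a hypothetical `BoundedToolbox` wrapper and an illustrative `amount` limit; it covers only the deterministic part, and a semantic firewall would be a separate layer in front of it.

```python
from typing import Any, Callable


class ActionNotAllowed(Exception):
    """Raised when the agent proposes an action outside its bounds."""


class BoundedToolbox:
    """Hypothetical wrapper: the agent can only call tools registered here."""

    def __init__(self, tools: dict[str, Callable[..., Any]], max_amount: float):
        self._tools = dict(tools)      # copy so callers cannot add tools later
        self._max_amount = max_amount  # illustrative hard cap on any "amount"

    def call(self, name: str, **kwargs: Any) -> Any:
        # Bound 1: unregistered tools cannot be invoked at all.
        if name not in self._tools:
            raise ActionNotAllowed(f"tool not registered: {name}")
        # Bound 2: any monetary amount is capped, whatever the agent intends.
        if kwargs.get("amount", 0) > self._max_amount:
            raise ActionNotAllowed(f"amount exceeds limit of {self._max_amount}")
        return self._tools[name](**kwargs)
```

Because the checks are plain code rather than model output, they behave the same way every time, which is the point of keeping them deterministic.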

Two Paths Forward: Build or Partner

This brings us to your choice as a founder. You've got two realistic options:

Option 1: Drive Agents Yourself

Use platforms like Evotron (our AI product building platform) and Supramono (our AI sales and marketing suite) to build and manage your own AI workflows. You get full control, but you're responsible for designing the oversight, handling the edge cases, and managing the complexity.

Option 2: Partner with an Agentic Venture Studio

Work with a team that's already solved these problems. At Evotron Studio, we combine experienced human mentorship with our portfolio of AI products. You get the AI acceleration without having to become an AI expert overnight.

Both approaches work. The question is: where do you want to spend your time and energy?

The Future Isn't Fully Autonomous (Yet)

Despite exciting demos like BabyAGI and Devin, production-ready systems are still focused on controlled, semi-agentic workflows with human oversight. Rather than treating human oversight as an admission of AI's limitations, leading organizations are designing "Enterprise Agentic Automation" that combines dynamic AI execution with deterministic guardrails and human judgment at key decision points. Full automation isn't always the optimal goal.

The most successful founders in 2026 aren't the ones trying to remove humans from the equation entirely. They're the ones who've figured out the right balance—letting AI handle the 80% it's great at while keeping humans involved for the 20% that actually matters.

Your move: keep wrestling with AI complexity alone, or partner with a team that's already cracked the code.

Ready to build smarter, not harder? At Evotron Studio, we help NZ founders build their MVP and take it to market using our portfolio of AI products—with human expertise baked in. Learn more about working with us.

Evotron Studio

We help founders build and sell.
